Health status index uuid pri rep unt leted store.size Your data should now be shipped to elasticsearch, by default under the filebeat-YYYY.mm.dd index pattern. You can have a look at the logs, should you need to debug: tail -f /var/log/filebeat/filebeat Then restart filebeat: $ /etc/init.d/filebeat restart Lets enable system (syslog, auth, etc) and nginx for our web server: $ filebeat modules enable systemĮxample of my /etc/filebeat/modules.d/system.yml configuration: - module: systemĮxample of my /etc/filebeat/modules.d/nginx.yml configuration: - module: nginx Filebeat Modulesįilebeat comes with modules that has context on specific applications like nginx, mysql etc. Open up /etc/filebeat/filebeat.yml and edit the following: filebeat.inputs:Ībove, just setting my path to nginx access logs, some extra fields, including that it shoulds seed kibana with example visualizations and the output configuration of elasticsearch. Let's configure our main configuration in filebeat, to specify our location where the data should be shipped to (in this case elasticsearch) and I will also like to set some extra fields that will apply to this specific server. Install Filebeat and enable the service on boot: $ apt install filebeat -y Update the repositories: $ apt update & apt upgrade -y Get the repository definition: $ echo "deb stable main" | tee -a /etc/apt//elastic-6.x.list Get the public signing key: $ wget -qO - | sudo apt-key add.

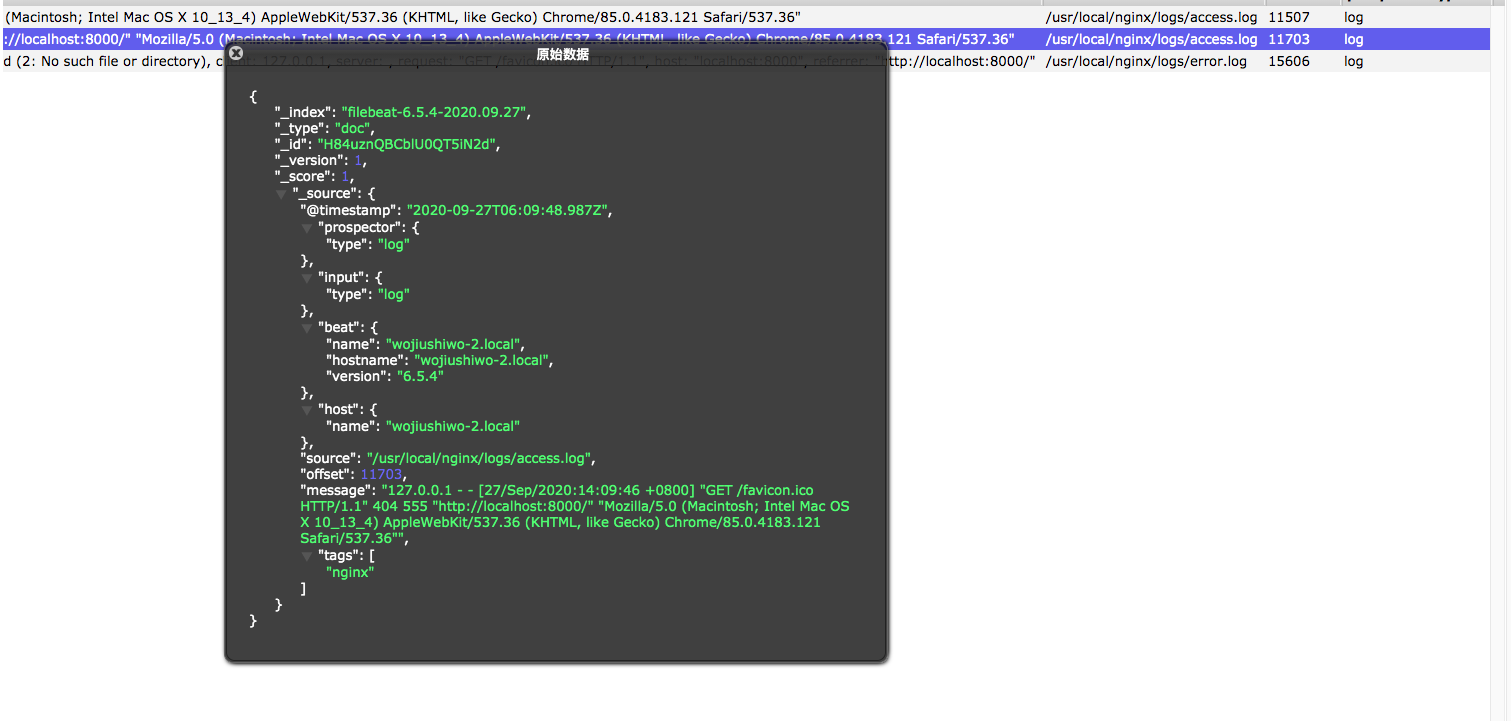

Install the dependencies: $ apt install wget apt-transport-https -y To check the version of your elasticsearch cluster: $ curl # i have es running locally I will be using version 6.7 as that will be the same version that I am running on my Elasticsearch. Filebeat Overviewįilebeat runs as agents, monitors your logs and ships them in response of events, or whenever the logfile receives data.īelow is a overview (credit: ) how Filebeat works Installing Filebeat Filbeat monitors the logfiles from the given configuration and ships the to the locations that is specified.
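The filebeat.yml step is only described in words, so here is a minimal sketch of what such a file might look like on Filebeat 6.x. It is not my exact configuration: the host addresses, the log path and the field names under `fields` are assumptions chosen for illustration, and you should adjust them to your own setup.

```yaml
# /etc/filebeat/filebeat.yml (illustrative sketch, values are assumptions)
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log      # assumed location of the nginx access log

# Extra fields added to every event from this server (hypothetical names)
fields:
  environment: production
  service_type: webserver

# Ask Filebeat to load the example dashboards into Kibana
setup.dashboards.enabled: true
setup.kibana:
  host: "localhost:5601"             # assumes Kibana runs locally

# Ship events straight to Elasticsearch
output.elasticsearch:
  hosts: ["localhost:9200"]          # assumes Elasticsearch runs locally
```

After changing the file, restarting the service as shown earlier should pick up the new settings.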

Filebeat by Elastic is a lightweight log shipper that ships your logs to Elastic products such as Elasticsearch and Logstash.

Increase the value of filebeat.spool_size based on actual requirements. This parameter defines the number of log records that the spooler can upload at a time.

Adjust the value of filebeat.idle_timeout based on actual requirements. This parameter defines how often the spooler is flushed. After the idle_timeout is reached, the spooler is flushed regardless of whether the spool_size has been reached.

Optimize the parameters involved in output.elasticsearch in the filebeat.yml configuration file.

Set the value of worker based on actual requirements, for example to match the number of Elasticsearch clusters. This parameter sets the number of workers per configured host that publish events to Elasticsearch.

Increase the value of bulk_max_size based on actual requirements. This parameter defines the maximum number of events to bulk in a single Elasticsearch bulk API index request. The default is 50.

Adjust the value of flush_interval based on actual requirements. This parameter defines the number of seconds to wait for new events between two bulk API index requests. If bulk_max_size is reached before this interval expires, additional bulk index requests are made.
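As a rough illustration of where these spooler and output settings live, here is a sketch of the relevant part of filebeat.yml. The numbers are placeholders rather than recommendations, and the host is an assumption. As far as I know, filebeat.spool_size, filebeat.idle_timeout and flush_interval belong to Filebeat 5.x and were replaced by queue settings in 6.0, so treat this as applying to the older releases only.

```yaml
# Spooler tuning (Filebeat 5.x option names, illustrative values)
filebeat.spool_size: 4096        # events the spooler sends in one batch
filebeat.idle_timeout: 5s        # flush the spooler at least this often

# Elasticsearch output tuning
output.elasticsearch:
  hosts: ["localhost:9200"]      # assumes Elasticsearch runs locally
  worker: 2                      # workers per configured host
  bulk_max_size: 2048            # max events per bulk API request (default 50)
  flush_interval: 1s             # wait for new events between bulk requests
```

A larger bulk_max_size only helps if the spooler hands over batches that big, so it makes sense to raise filebeat.spool_size together with it.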

Optimize the parameters involved in the input section of the filebeat.yml configuration file.

Increase the value of harvester_buffer_size based on actual requirements. This parameter defines the buffer size used by every harvester.
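For the input side, a sketch like the following shows where harvester_buffer_size goes. It assumes the filebeat.inputs syntax of newer 6.x releases and a hypothetical nginx log path. The value is in bytes, and 32768 is only an example that doubles the default of 16384.

```yaml
# Input tuning (illustrative sketch, path and value are assumptions)
filebeat.inputs:
- type: log
  paths:
    - /var/log/nginx/access.log
  harvester_buffer_size: 32768   # bytes of buffer per harvester (default 16384)
```

Since every harvester gets its own buffer, memory use grows with the number of open files, so raise the value with that in mind.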
